I said yesterday that the differences I’m seeing in night shots, compared to my outgoing iPhone 11 Pro, are subtle. But there is one feature that is not only new this year, but found only on the two Pro models: Night mode portraits. That’s something I wouldn’t get with the iPhone 12 mini.

A test on Friday night quickly revealed the main drawback to these: both photographer and subject have to keep very still. But I was impressed enough to want to experiment further, so last night I headed out into the darkness with a volunteer to see what night portrait results could be achieved with a little care…

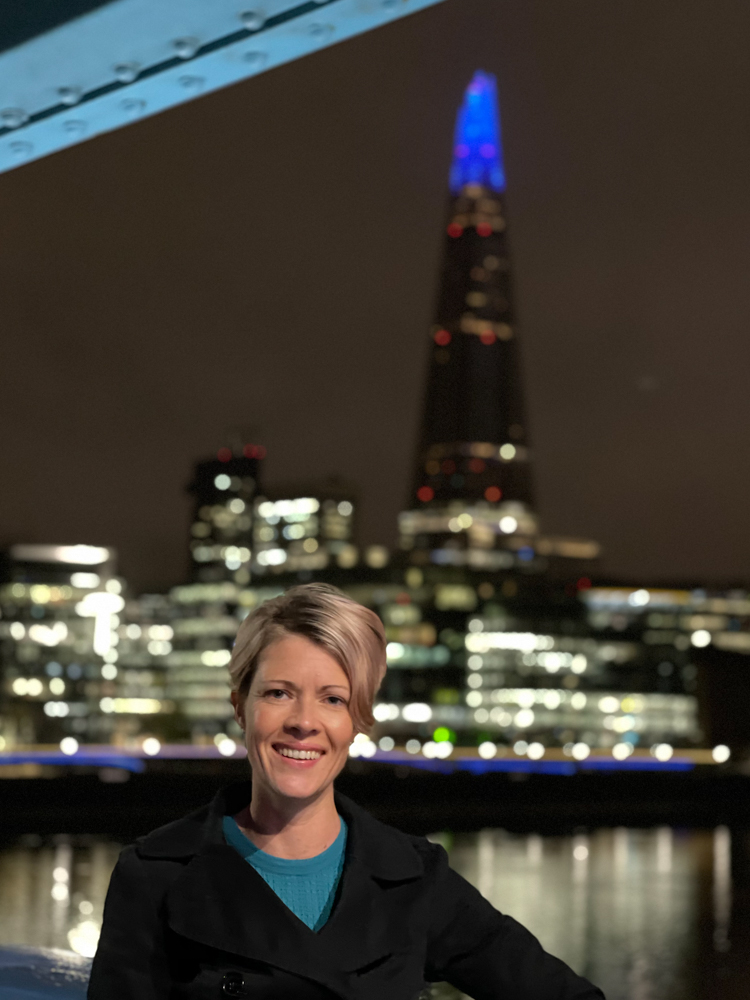

The answer to the question is: really good ones. As before, all photos are straight from camera, with zero editing other than crops and resizing for web.

I should say, some of the compositions may look a little odd. I wanted to show as much background as possible to fully illustrate the artificial bokeh created by the camera, hence the unusual framing in some shots.

All the photos were lit by nothing more than street lamps (and even less than that in two cases), yet they look as brightly lit as a studio shot. Considering that exposure times ranged from 1/13th of a second to 1/25th of a second, and the highest ISO I saw was 1,000, that’s truly incredible.

The LiDAR scanner clearly works: The cutouts appear technically perfect (though more on this later), and auto-focus using automatic face detection was lightning fast.

So, enough preamble: Let’s take a look at the photos…

Although this first one doesn’t have the more dramatic city background seen in most of the other shots, it’s the perfect example to showcase what Night mode portraits can deliver in the right conditions.

Caroline is lit by a very warm street lamp, and the same street lamps continue off down the street behind her, and are also reflected in the wet stone. That consistent color temperature across foreground, midground, and background means that the photo has a very natural appearance. It’s honestly hard to see here that the bokeh is artificial.

For most city shots, there’s a significant color temperature difference between the lighting on the subject, and the city lights in the background. While that has nothing to do with the iPhone, when combined with the artificial bokeh, it can contribute to a sense that the person is superimposed on the background. Here’s an example of that.

Caroline is lit by a very cool streetlamp, while the background has warmer colors. There’s also nothing in the mid-ground, just foreground and distant background. The result is a much more artificial look, akin to a flash-lit shot. A non-photographer probably wouldn’t be able to tell you why, but I think they’d still sense that this shot looks much more like an artificial cutout.

(You can also see blown highlights in this shot, which I’ll come onto later.)

Here’s an example of the opposite issue: Caroline is lit by rather warm lighting through an office window, while the rainy background has cooler lighting.

You might wonder why I’m going on and on about the color temperature problem, when it’s a generic photographic challenge rather than an issue with the iPhone? It’s because I think it’s a problem Apple could solve.

Apple is using computational photography to do a whole bunch of things here. It is measuring distances to calculate the appropriate amount of bokeh to add. It is also doing its best to achieve a reasonable balance between foreground and background exposures — even when there is the kind of extreme contrast seen here. I think Apple could potentially use computational photography to resolve color temperature differences, too.

Since the phone is already separating the subject from the background, it seems to me it ought to be possible for the phone to analyze the color temperatures and then adjust the warmth of the background to achieve a better balance. (Background rather than foreground, because messing with city lights would be preferable to messing with skin tone.) That would be achieving something that cannot be achieved by conventional cameras when using available light.
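To make that idea concrete, here is a rough sketch of what such a correction could look like. It is purely illustrative and not how Apple's pipeline works: the function names, the `strength` parameter, and the use of the red-to-blue ratio as a stand-in for color temperature are all my own assumptions.

```python
from typing import List, Tuple

Pixel = Tuple[float, float, float]  # assumed linear RGB, each channel 0.0..1.0


def warmth(pixels: List[Pixel]) -> float:
    """Crude warmth proxy (an assumption): mean red / mean blue. Higher = warmer."""
    mean_r = sum(p[0] for p in pixels) / len(pixels)
    mean_b = sum(p[2] for p in pixels) / len(pixels)
    return mean_r / max(mean_b, 1e-6)


def match_background_warmth(
    image: List[Pixel], subject_mask: List[bool], strength: float = 1.0
) -> List[Pixel]:
    """Shift background warmth toward the subject's; subject pixels are untouched.

    subject_mask[i] is True where pixel i belongs to the subject (the kind of
    separation the phone already computes for the bokeh effect).
    """
    subject = [p for p, m in zip(image, subject_mask) if m]
    background = [p for p, m in zip(image, subject_mask) if not m]
    if not subject or not background:
        return list(image)

    ratio = warmth(subject) / warmth(background)
    # Split the correction between red and blue so brightness shifts less.
    gain = ratio ** (0.5 * strength)

    result = []
    for (r, g, b), is_subject in zip(image, subject_mask):
        if is_subject:
            result.append((r, g, b))  # leave skin tones alone
        else:
            result.append((min(r * gain, 1.0), g, min(b / gain, 1.0)))
    return result


# Hypothetical four-pixel "photo": a cool-lit subject against a warm background.
photo = [(0.4, 0.4, 0.6), (0.4, 0.4, 0.6), (0.6, 0.4, 0.2), (0.5, 0.4, 0.3)]
mask = [True, True, False, False]
balanced = match_background_warmth(photo, mask)
print(warmth(photo[2:]), "->", warmth(balanced[2:]))
```

A real implementation would need to work per region rather than on one global average, and a `strength` below 1.0 would probably look more natural than a full match, since some difference between subject and city lights is part of the scene.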

But the very fact that I’m highlighting what I feel could be a next-generation improvement in Night mode portraits tells its own story: I’m so impressed by what Apple has got right here that I’m already excited by what I see as the obvious next step.

I continued to be blown away by just how much light this camera can suck in. This next photo was lit by a not particularly bright green light sculpture that’s a good 10 feet above her head, and a similar distance away. The amount of light to play with here was very little indeed – yet again, auto-focus was instant and her face appears much more brightly lit than it did in reality.

Let’s talk cutouts. In many cases, I’d say the results still look a little artificial. There’s just too sharp a contrast at the edges.

I think a photographer would fairly easily guess that this is artificial. At the same time, I also think most non-photographers wouldn’t notice. It’s not perfect, but it is very good.

When you examine the isolation itself, it’s technically perfect. This is a whole new level over the iPhone 11 Pro, and I think that’s all down to the LiDAR scanner. Look at the coat in that shot:

A black coat against a black background at the top, but the LiDAR has simply shrugged and told the camera not to worry, it’s got this.

When we take a close-up look at Night mode portraits, there’s one piece of bad news and two pieces of good news.

The bad news is that we do see softness in the images. Again, that’s not an iPhone-specific issue: It’s the inevitable result of camera and subject movement at relatively slow shutter speeds. Compare the web-res version of this photo — which appears sharp — with a larger version, where the softness is evident:

I’m not going to be creating any large prints from Night mode portraits.

But the first good news is that, honestly, that’s still phenomenally impressive. With a dedicated camera, you’d normally be using off-camera flash to freeze the subject. To get a result that looks perfectly sharp at web res using only street lighting is nothing short of amazing. I suspect the sensor-shift image stabilization on the Pro models is playing a part here: Sensors are lighter than lenses, so can react more swiftly to camera movement.

The other good news when pixel-peeping is that we can see fall-off in focus in the hair at the back of her head. That will again be down to the LiDAR, and it helps create a significantly more realistic image than the previous generation. More on this shortly.

Night portraits in general pose a big exposure challenge for any camera, because — assuming you’ve placed your subject close to a light source to minimize the noise in the image — the foreground is always much more brightly lit than the background.

I think the iPhone 12 does a remarkable job here. The only issue is blown highlights in white lights; couple this with the artificial blur, and it does sometimes result in white blobs where you have brighter lighting in the background. Here, for example, the white bridge lighting above her head is just a mass of solid white (more on this photo in a moment):

While here, the Butler’s Wharf sign on the top of the building is even worse:

Reducing the exposure enough to correctly expose the sign resulted in Caroline being reduced to gray fuzziness, so that wasn’t an option.

Even using computational photography, this is a very tough problem for Apple to solve: it’s generally easier to recover shadows than highlights, but boosting shadows on skin doesn’t generally work well. And again, none of this is a criticism of the iPhone: Some exposure challenges just can’t be overcome. The solution to the Butler’s Wharf shot is: Don’t take it.

Back to this photo, this time looking at the artificial bokeh:

The sculpture is about 10 feet behind her, the low wall maybe 20 feet, the fence perhaps 40 feet, and the bridge several hundred feet. I think the iPhone has done a really good job of applying an appropriate amount of blur to each, and the following examples just get better.
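For a sense of what "appropriate" means here, the standard thin-lens circle-of-confusion formula gives the blur a real lens would produce at each distance. The sketch below applies it to the rough distances above. The subject distance (1.5 m), the simulated lens (50mm at f/1.8), and the 300 feet used for "several hundred" are all assumptions of mine, and this is not a claim about how the iPhone computes its blur.

```python
FEET_TO_M = 0.3048


def blur_diameter_mm(
    subject_m: float, object_m: float, focal_mm: float = 50.0, f_number: float = 1.8
) -> float:
    """Thin-lens blur-disc diameter on the sensor for an out-of-focus object.

    subject_m: distance the lens is focused at (the person), in meters.
    object_m:  distance of the background object from the camera, in meters.
    """
    s = subject_m * 1000.0  # focus distance in mm
    d = object_m * 1000.0   # object distance in mm
    aperture_mm = focal_mm / f_number
    return aperture_mm * focal_mm * abs(d - s) / (d * (s - focal_mm))


subject_m = 1.5  # assumed camera-to-subject distance
layers_feet_behind = {"sculpture": 10, "low wall": 20, "fence": 40, "bridge": 300}

for name, feet in layers_feet_behind.items():
    object_m = subject_m + feet * FEET_TO_M
    print(f"{name:10s} {blur_diameter_mm(subject_m, object_m):.3f} mm")
```

The useful takeaway is the shape of the curve: blur grows quickly just behind the subject, then levels off toward a fixed maximum, so the bridge should look only a little softer than the fence rather than many times blurrier.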

In this one, compare the blur applied to the barrel, then the hanging yellow lights on the right, and finally the distant wall at the back:

Every layer has been treated differently and realistically.

For the final shot, I wanted to give the iPhone the ultimate bokeh challenge! I chose a very layered scene. Layer one is Caroline. Layer two is the fencing, which is right behind her in the foreground, but the angle then puts it progressively further away – so really this is layer two through two-point-nine. Layer three is the boat; layer four, Tower Bridge (though as the right tower is further away at this angle, that’s really layers four and four-point-five); layer five, the City buildings behind it.

So, how well did the iPhone cope?

The answer is: absolutely superbly!

The amount of blur applied at each of the distances is completely realistic, and the way the focus falls away on the fencing itself is a work of art. The shot really feels three-dimensional, and I honestly don’t think I would have guessed this was all artificial bokeh. I was blown away when I saw the result: This is breathtakingly good.

More than that, it’s almost magical. OK, the LiDAR can tell how far away Caroline is, and the various parts of the fence, and the boat. But the two towers of the bridge? Bouncing a low-powered laser off those is impressive. And the City, which is like a mile away? It seems impossible to get a return from that distance using only a tiny scanner fitted inside a smartphone. Yet here we are, with this degree of layering. I’m truly astonished.

If you want to get a sense of how insanely good Night mode portraits are, take a look at the photo below, which I took almost exactly 10 years ago. That took a professional DSLR, two flashguns, three radio transceivers, two gels, two soft boxes, a lightstand, and about half an hour of setup; today, I can get close to the same result in about 20 seconds with an iPhone and a streetlight… That’s nuts.

Updated conclusions after Night mode portraits

So, where am I with my decision?

Prior to this shoot, I was still very much wavering. I was satisfied that the iPhone 12 Pro Max takes better photos than the mini, and the improvement over my iPhone 11 Pro was also clear under close examination. At the same time, I had to acknowledge that the gain I’d be getting over the mini was subtle.

However, Night mode portraits are a feature enabled by the LiDAR scanner, and therefore limited to the two Pro models. Which is significant to me: I do like to photograph people – my partner, and friends and family – and I do take a lot of photos at night. Having seen these results, I’m incredibly excited by this capability, and would now be very much drawn to the Pro Max even if it still felt far too big.

But another factor is also beginning to come into play here, and it was probably an inevitable one: I’m getting used to the size of the phone. On day one, it felt like a comically outsized phone; by day four, it still feels big, but no longer absurdly so. (Indeed, there is something to be said for the larger screen more generally, but that’s a topic for another piece.)

I mean, I would still kill to have this photographic performance in the super-cute iPhone 12 mini. That really is the phone I’ve been asking for ever since the iPhone X, and it does seem from reviews that it’s every bit as good as I’d hoped.

But, given the choice between pocketability and photographic capability, I am now very strongly favoring the latter.

My decision isn’t yet 100% made for one reason: I’m conscious I’m making this decision in winter, when I always have a jacket pocket. In summer, wearing shirt sleeves will mean putting the phone in a bag, and that could feel like a hassle. OK, in Britain, that’s like a month or two per year at best, but there’s travel, too (you know, when that’s a thing again).

So my final sanity check is to test that: carry it in a bag next time I’m out, and see whether that proves too irritating, especially at a time when I’m still going to be taking a lot of photos. But right now, I suspect my decision is made.

What about you? If you’ve played with Night mode portraits yourself, what are your impressions? If you haven’t, what do you think based on what you’ve seen here? Please share your thoughts in the comments.
